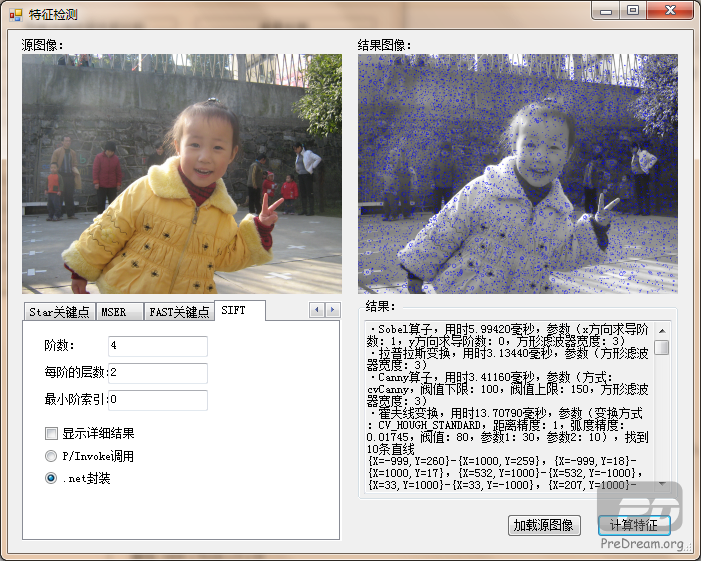

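Image Feature Detection

Author: 王先荣 (Wang Xianrong)

Preface

Image feature extraction is a concept from computer vision and image processing: a computer extracts information from an image and decides, for each point, whether it belongs to an image feature. This article focuses on extracting one such feature, the "corner", and on related topics. Histograms, edges, regions and the like were covered in earlier articles; please refer to those. OpenCv (EmguCv) implements several corner-like feature detectors, including Harris corners, ShiTomasi corners, sub-pixel corners, SURF corners, Star keypoints, FAST keypoints and Lepetit keypoints, and this article shows how to detect each of them in turn. Before that, it introduces some transforms closely related to corner detection: the Sobel operator, the Laplacian operator, the Canny operator and the Hough transform. It also covers SIFT corner detection, which is widely used but not implemented in OpenCv, and MSER region detection, which was recently added to OpenCv. There is a lot of ground to cover, so I concentrate on pitfalls and implementation code; material already available in the reference manual and in books is mentioned only briefly or omitted.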

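Sobel Operator

The Sobel operator approximates derivatives by fitting a polynomial; it is available as the cvSobel function in OpenCv and as the Image<TColor,TDepth>.Sobel method in EmguCv. Note that the example here requires exactly one of xorder and yorder to be non-zero, so it computes a derivative in either the x direction or the y direction. If the aperture size of the square filter is set to the special value CV_SCHARR (-1), the Scharr filter is used instead of the Sobel filter.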
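
To make the x/y distinction concrete, here is a minimal sketch of a 3x3 Sobel derivative written in plain C# on a 2D array, without EmguCv. The names SobelSketch and Derivative are made up for this illustration, and border pixels are simply left at 0, which is not how OpenCv treats borders.

```csharp
using System;

static class SobelSketch
{
    // 3x3 Sobel kernels: Kx responds to intensity change along x, Ky along y.
    static readonly int[,] Kx = { { -1, 0, 1 }, { -2, 0, 2 }, { -1, 0, 1 } };
    static readonly int[,] Ky = { { -1, -2, -1 }, { 0, 0, 0 }, { 1, 2, 1 } };

    // First derivative along x (like xOrder=1, yOrder=0) or along y (xOrder=0, yOrder=1).
    // Assumption of this sketch: border pixels are left at 0.
    public static int[,] Derivative(byte[,] src, bool alongX)
    {
        int h = src.GetLength(0), w = src.GetLength(1);
        int[,] k = alongX ? Kx : Ky;
        var dst = new int[h, w];
        for (int y = 1; y < h - 1; y++)
            for (int x = 1; x < w - 1; x++)
            {
                int sum = 0;
                for (int dy = -1; dy <= 1; dy++)
                    for (int dx = -1; dx <= 1; dx++)
                        sum += k[dy + 1, dx + 1] * src[y + dy, x + dx];
                dst[y, x] = sum;
            }
        return dst;
    }
}
```

For a 3x3 image whose rows are all {0, 0, 10} (a vertical step edge), the x derivative at the center is 40 while the y derivative is 0, so only the x pass reacts to that edge.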

Sample code using the Sobel filter:

// Sobel operator

private string SobelFeatureDetect()

{

// Read parameters

int xOrder = int.Parse((string)cmbSobelXOrder.SelectedItem);

int yOrder = int.Parse((string)cmbSobelYOrder.SelectedItem);

int apertureSize = int.Parse((string)cmbSobelApertureSize.SelectedItem);

if ((xOrder == 0 && yOrder == 0) || (xOrder != 0 && yOrder != 0))

return "Sobel operator, invalid parameters: exactly one of xOrder and yOrder must be non-zero.\r\n";

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

Image<Gray, Single> imageDest = imageSourceGrayscale.Sobel(xOrder, yOrder, apertureSize);

sw.Stop();

// Display

pbResult.Image = imageDest.Bitmap;

// Release resources

imageDest.Dispose();

// Return

return string.Format("·Sobel operator, elapsed {0:F05} ms, parameters (x derivative order: {1}, y derivative order: {2}, aperture size: {3})\r\n", sw.Elapsed.TotalMilliseconds, xOrder, yOrder, apertureSize);

}

Laplacian Operator

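The Laplacian operator can be used for edge detection; it is available as the cvLaplace function in OpenCv and as the Image<TColor,TDepth>.Laplace method in EmguCv. Note that the OpenCv documentation contains a small error: the apertureSize parameter cannot be CV_SCHARR (-1).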
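
As a rough illustration of what a Laplacian filter computes, the sketch below applies the common 3x3 kernel (the sum of the second derivatives in x and y) to a 2D array in plain C#. LaplaceSketch and Apply are hypothetical names, the kernel is only one of the sizes the library can use, and borders are left at 0 for brevity.

```csharp
using System;

static class LaplaceSketch
{
    // Discrete Laplacian: d2/dx2 + d2/dy2 on a 4-neighbourhood.
    static readonly int[,] K = { { 0, 1, 0 }, { 1, -4, 1 }, { 0, 1, 0 } };

    // Assumption of this sketch: border pixels are left at 0.
    public static int[,] Apply(byte[,] src)
    {
        int h = src.GetLength(0), w = src.GetLength(1);
        var dst = new int[h, w];
        for (int y = 1; y < h - 1; y++)
            for (int x = 1; x < w - 1; x++)
            {
                int sum = 0;
                for (int dy = -1; dy <= 1; dy++)
                    for (int dx = -1; dx <= 1; dx++)
                        sum += K[dy + 1, dx + 1] * src[y + dy, x + dx];
                dst[y, x] = sum;
            }
        return dst;
    }
}
```

A flat region gives 0, while an isolated bright pixel gives a strong negative response at its own position; edges show up where the response changes sign.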

Sample code using the Laplacian transform:

// Laplacian transform

private string LaplaceFeatureDetect()

{

// Read parameters

int apertureSize = int.Parse((string)cmbLaplaceApertureSize.SelectedItem);

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

Image<Gray, Single> imageDest = imageSourceGrayscale.Laplace(apertureSize);

sw.Stop();

// Display

pbResult.Image = imageDest.Bitmap;

// Release resources

imageDest.Dispose();

// Return

return string.Format("·Laplacian transform, elapsed {0:F05} ms, parameters (aperture size: {1})\r\n", sw.Elapsed.TotalMilliseconds, apertureSize);

}

Canny Operator

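The Canny operator can also be used for edge detection; it is available as the cvCanny function in OpenCv and as the Image<TColor,TDepth>.Canny method in EmguCv. The difference is that Image<TColor,TDepth>.Canny can detect edges in color images but only uses the default apertureSize of 3, whereas cvCanny only handles grayscale images but lets you choose apertureSize. The parameter names of cvCanny and Canny differ slightly; the table below maps one to the other.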

| Image<TColor,TDepth>.Canny | CvInvoke.cvCanny |
| --- | --- |
| thresh | lowThresh |
| threshLinking | highThresh |
| (fixed at 3) | apertureSize |

Note that apertureSize can only be 3, 5 or 7, as can be seen at line 87 of cvcanny.cpp:

aperture_size &= INT_MAX;

if( (aperture_size & 1) == 0 || aperture_size < 3 || aperture_size > 7 )

CV_ERROR( CV_StsBadFlag, "" );
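
The same check can be mirrored on the C# side before calling cvCanny, so that a bad value is caught without going through the native error path. This is only a sketch; CannyApertureSketch and IsValidApertureSize are names invented here, not part of EmguCv.

```csharp
using System;

static class CannyApertureSketch
{
    // Mirrors the quoted C check: the size must be odd and lie in [3, 7].
    public static bool IsValidApertureSize(int apertureSize)
    {
        apertureSize &= int.MaxValue; // clear the sign bit, as the C code does with INT_MAX
        return (apertureSize & 1) != 0 && apertureSize >= 3 && apertureSize <= 7;
    }
}
```

With this helper, 3, 5 and 7 are accepted, while values such as 1, 4, 9 and -1 are rejected.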

Sample code using the Canny operator:

// Canny operator

private string CannyFeatureDetect()

{

// Read parameters

double lowThresh = double.Parse(txtCannyLowThresh.Text);

double highThresh = double.Parse(txtCannyHighThresh.Text);

int apertureSize = int.Parse((string)cmbCannyApertureSize.SelectedItem);

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

Image<Gray, Byte> imageDest = null;

Image<Bgr, Byte> imageDest2 = null;

if (rbCannyUseCvCanny.Checked)

{

imageDest = new Image<Gray, byte>(imageSourceGrayscale.Size);

CvInvoke.cvCanny(imageSourceGrayscale.Ptr, imageDest.Ptr, lowThresh, highThresh, apertureSize);

}

else

imageDest2 = imageSource.Canny(new Bgr(lowThresh, lowThresh, lowThresh), new Bgr(highThresh, highThresh, highThresh));

sw.Stop();

// Display

pbResult.Image = rbCannyUseCvCanny.Checked ? imageDest.Bitmap : imageDest2.Bitmap;

// Release resources

if (imageDest != null)

imageDest.Dispose();

if (imageDest2 != null)

imageDest2.Dispose();

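// Return

return string.Format("·Canny operator, elapsed {0:F05} ms, parameters (method: {1}, lower threshold: {2}, upper threshold: {3}, aperture size: {4})\r\n", sw.Elapsed.TotalMilliseconds, rbCannyUseCvCanny.Checked ? "cvCanny" : "Image<TColor, TDepth>.Canny", lowThresh, highThresh, apertureSize);

}

In addition, http://www.china-vision.net/blog/user2/15975/archives/2007/804.html describes a method for choosing the high and low Canny thresholds automatically, with code in C provided by its author. I ported it to C#; the code is as follows: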
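
As a warm-up for that listing, the core idea can be sketched without any image library: build a histogram of gradient magnitudes, walk it until a given share of pixels (the ones assumed not to be edges) has been passed, take the upper threshold there, and derive the lower threshold as a fixed fraction of it. CannyThresholdSketch, Estimate and the parameter names are hypothetical, and the binning is simplified compared with the EmguCv histogram used in the port.

```csharp
using System;

static class CannyThresholdSketch
{
    // magnitudes: per-pixel |dx| + |dy|; nonEdgeFraction: share of pixels assumed not to be edges;
    // lowRatio: lower threshold as a fraction of the upper one.
    public static void Estimate(float[] magnitudes, double nonEdgeFraction, double lowRatio,
                                out double low, out double high)
    {
        float max = 0;
        foreach (float m in magnitudes) if (m > max) max = m;
        if (max <= 0) { low = high = 0; return; } // flat image: no gradient at all

        int bins = Math.Min(255, Math.Max(1, (int)max));
        var hist = new int[bins];
        foreach (float m in magnitudes)
            hist[Math.Min(bins - 1, (int)(m * bins / max))]++;

        long target = (long)(magnitudes.Length * nonEdgeFraction);
        long sum = 0;
        int i = 0;
        for (; i < bins; i++)
        {
            sum += hist[i];
            if (sum > target) break; // enough non-edge pixels accounted for
        }
        i = Math.Min(i, bins - 1);
        high = (i + 1) * (double)max / bins;
        low = high * lowRatio;
    }
}
```

The port in this article uses 0.7 for the non-edge share and 0.4 for the ratio between the two thresholds; both are tuning choices rather than fixed rules.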

/// <summary>

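/// Computes adaptive Canny thresholds for an image

/// </summary>

/// <param name="imageSrc">Source image; must be a 256-level grayscale image</param>

/// <param name="apertureSize">Aperture size of the square filter</param>

/// <param name="lowThresh">Lower threshold</param>

/// <param name="highThresh">Upper threshold</param>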

unsafe void AdaptiveFindCannyThreshold(Image<Gray, Byte> imageSrc, int apertureSize, out double lowThresh, out double highThresh)

{

// Compute the first-order Sobel derivatives of the source image in the x and y directions

Size size = imageSrc.Size;

Image<Gray, Int16> imageDx = new Image<Gray, short>(size);

Image<Gray, Int16> imageDy = new Image<Gray, short>(size);

CvInvoke.cvSobel(imageSrc.Ptr, imageDx.Ptr, 1, 0, apertureSize);

CvInvoke.cvSobel(imageSrc.Ptr, imageDy.Ptr, 0, 1, apertureSize);

Image<Gray, Single> image = new Image<Gray, float>(size);

int i, j;

DenseHistogram hist = null;

int hist_size = 255;

float[] range_0 = new float[] { 0, 256 };

double PercentOfPixelsNotEdges = 0.7;

// Compute the edge strength and store it in an image

float maxv = 0;

float temp;

byte* imageDataDx = (byte*)imageDx.MIplImage.imageData.ToPointer();

byte* imageDataDy = (byte*)imageDy.MIplImage.imageData.ToPointer();

byte* imageData = (byte*)image.MIplImage.imageData.ToPointer();

int widthStepDx = imageDx.MIplImage.widthStep;

int widthStepDy = widthStepDx;

int widthStep = image.MIplImage.widthStep;

for (i = 0; i < size.Height; i++)

{

short* _dx = (short*)(imageDataDx + widthStepDx * i);

short* _dy = (short*)(imageDataDy + widthStepDy * i);

float* _image = (float*)(imageData + widthStep * i);

for (j = 0; j < size.Width; j++)

{

temp = (float)(Math.Abs(*(_dx + j)) + Math.Abs(*(_dy + j)));

*(_image + j) = temp;

if (maxv < temp)

maxv = temp;

}

}

// Compute the histogram

range_0[1] = maxv;

hist_size = hist_size > maxv ? (int)maxv : hist_size;

hist = new DenseHistogram(hist_size, new RangeF(range_0[0], range_0[1]));

hist.Calculate<Single>(new Image<Gray, Single>[] { image }, false, null);

int total = (int)(size.Height * size.Width * PercentOfPixelsNotEdges);

double sum = 0;

int icount = hist.BinDimension[0].Size;

for (i = 0; i < icount; i++)

{

sum += hist[i];

if (sum > total)

break;

}

// Compute the thresholds

highThresh = (i + 1) * maxv / hist_size;

lowThresh = highThresh * 0.4;

// Release resources

imageDx.Dispose();

imageDy.Dispose();

image.Dispose();

hist.Dispose();

}

Hough Transform

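The Hough transform is a method for finding lines, circles and other simple shapes in an image; OpenCv implements the Hough line transform and the Hough circle transform. A few points deserve attention: (1) HoughLines2 requires Canny edges to be computed first, and lines are then detected on the edge image; (2) how the results of HoughLines2 are read depends on the chosen method: the probabilistic method returns line segments, while the other methods return (rho, theta) pairs; (3) the results of HoughCircles appear to be incorrect.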
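
Point (2) is the part that tends to trip people up, so here is a small sketch of how a (rho, theta) pair can be turned into two drawable endpoints. It is plain C# with no EmguCv types; HoughLineSketch, ToEndpoints and halfLength are names chosen for this illustration.

```csharp
using System;

static class HoughLineSketch
{
    // Converts a line in normal form (rho, theta) into two points on that line,
    // each halfLength away from the foot of the perpendicular dropped from the origin.
    public static void ToEndpoints(double rho, double theta, double halfLength,
        out double x1, out double y1, out double x2, out double y2)
    {
        double a = Math.Cos(theta), b = Math.Sin(theta);
        double x0 = a * rho, y0 = b * rho; // closest point of the line to the origin
        x1 = x0 - halfLength * b; y1 = y0 + halfLength * a;
        x2 = x0 + halfLength * b; y2 = y0 - halfLength * a;
    }
}
```

With rho = 5 and theta = 0 this yields the vertical line x = 5, running from (5, 1000) to (5, -1000) when halfLength is 1000. A value of 1000 is simply assumed to be long enough to cross the whole image.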

Sample code using the Hough transform:

// Hough line transform

private string HoughLinesFeatureDetect()

{

// Read parameters

HOUGH_TYPE method = rbHoughLinesSHT.Checked ? HOUGH_TYPE.CV_HOUGH_STANDARD : (rbHoughLinesPPHT.Checked ? HOUGH_TYPE.CV_HOUGH_PROBABILISTIC : HOUGH_TYPE.CV_HOUGH_MULTI_SCALE);

double rho = double.Parse(txtHoughLinesRho.Text);

double theta = double.Parse(txtHoughLinesTheta.Text);

int threshold = int.Parse(txtHoughLinesThreshold.Text);

double param1 = double.Parse(txtHoughLinesParam1.Text);

double param2 = double.Parse(txtHoughLinesParam2.Text);

MemStorage storage = new MemStorage();

int linesCount = 0;

StringBuilder sbResult = new StringBuilder();

// Compute: run Canny edge detection first (parameters come from the Canny property page), then the Hough line transform

double lowThresh = double.Parse(txtCannyLowThresh.Text);

double highThresh = double.Parse(txtCannyHighThresh.Text);

int apertureSize = int.Parse((string)cmbCannyApertureSize.SelectedItem);

Image<Gray, Byte> imageCanny = new Image<Gray, byte>(imageSourceGrayscale.Size);

CvInvoke.cvCanny(imageSourceGrayscale.Ptr, imageCanny.Ptr, lowThresh, highThresh, apertureSize);

Stopwatch sw = new Stopwatch();

sw.Start();

IntPtr ptrLines = CvInvoke.cvHoughLines2(imageCanny.Ptr, storage.Ptr, method, rho, theta, threshold, param1, param2);

Seq<LineSegment2D> linesSeq = null;

Seq<PointF> linesSeq2 = null;

if (method == HOUGH_TYPE.CV_HOUGH_PROBABILISTIC)

linesSeq = new Seq<LineSegment2D>(ptrLines, storage);

else

linesSeq2 = new Seq<PointF>(ptrLines, storage);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

if (linesSeq != null)

{

linesCount = linesSeq.Total;

foreach (LineSegment2D line in linesSeq)

{

imageResult.Draw(line, new Bgr(255d, 0d, 0d), 4);

sbResult.AppendFormat("{0}-{1},", line.P1, line.P2);

}

}

else

{

linesCount = linesSeq2.Total;

foreach (PointF line in linesSeq2)

{

float r = line.X;

float t = line.Y;

double a = Math.Cos(t), b = Math.Sin(t);

double x0 = a * r, y0 = b * r;

int x1 = (int)(x0 + 1000 * (-b));

int y1 = (int)(y0 + 1000 * (a));

int x2 = (int)(x0 - 1000 * (-b));

int y2 = (int)(y0 - 1000 * (a));

Point pt1 = new Point(x1, y1);

Point pt2 = new Point(x2, y2);

imageResult.Draw(new LineSegment2D(pt1, pt2), new Bgr(255d, 0d, 0d), 4);

sbResult.AppendFormat("{0}-{1},", pt1, pt2);

}

}

pbResult.Image = imageResult.Bitmap;

// Release resources

imageCanny.Dispose();

imageResult.Dispose();

storage.Dispose();

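// Return

return string.Format("·Hough line transform, elapsed {0:F05} ms, parameters (method: {1}, distance resolution: {2}, angle resolution in radians: {3}, threshold: {4}, param1: {5}, param2: {6}), found {7} lines\r\n{8}",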

sw.Elapsed.TotalMilliseconds, method.ToString("G"), rho, theta, threshold, param1, param2, linesCount, linesCount != 0 ? (sbResult.ToString() + "\r\n") : "");

}

// Hough circle transform

private string HoughCirclesFeatureDetect()

{

// Read parameters

double dp = double.Parse(txtHoughCirclesDp.Text);

double minDist = double.Parse(txtHoughCirclesMinDist.Text);

double param1 = double.Parse(txtHoughCirclesParam1.Text);

double param2 = double.Parse(txtHoughCirclesParam2.Text);

int minRadius = int.Parse(txtHoughCirclesMinRadius.Text);

int maxRadius = int.Parse(txtHoughCirclesMaxRadius.Text);

StringBuilder sbResult = new StringBuilder();

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

CircleF[][] circles = imageSourceGrayscale.HoughCircles(new Gray(param1), new Gray(param2), dp, minDist, minRadius, maxRadius);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

int circlesCount = 0;

foreach (CircleF[] cs in circles)

{

foreach (CircleF circle in cs)

{

imageResult.Draw(circle, new Bgr(255d, 0d, 0d), 4);

sbResult.AppendFormat("center {0} radius {1}, ", circle.Center, circle.Radius);

circlesCount++;

}

}

pbResult.Image = imageResult.Bitmap;

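// Release resources

imageResult.Dispose();

// Return

return string.Format("·Hough circle transform, elapsed {0:F05} ms, parameters (accumulator resolution (dp): {1}, minimum distance between circles: {2}, edge threshold: {3}, accumulator threshold: {4}, minimum radius: {5}, maximum radius: {6}), found {7} circles\r\n{8}",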

sw.Elapsed.TotalMilliseconds, dp, minDist, param1, param2, minRadius, maxRadius, circlesCount, sbResult.Length > 0 ? (sbResult.ToString() + "\r\n") : "");

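}

Harris Corners

The result of the cvCornerHarris function is actually a single-channel floating-point image containing the Harris corner response; code similar to the following computes that image:

// Harris corners

private string CornerHarrisFeatureDetect()

{

// Read parameters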

int blockSize = int.Parse(txtCornerHarrisBlockSize.Text);

int apertureSize = int.Parse(txtCornerHarrisApertureSize.Text);

double k = double.Parse(txtCornerHarrisK.Text);

// Compute

Image<Gray, Single> imageDest = new Image<Gray, float>(imageSourceGrayscale.Size);

Stopwatch sw = new Stopwatch();

sw.Start();

CvInvoke.cvCornerHarris(imageSourceGrayscale.Ptr, imageDest.Ptr, blockSize, apertureSize, k);

sw.Stop();

// Display

pbResult.Image = imageDest.Bitmap;

// Release resources

imageDest.Dispose();

// Return

return string.Format("·Harris corners, elapsed {0:F05} ms, parameters (block size: {1}, aperture size: {2}, k: {3})\r\n", sw.Elapsed.TotalMilliseconds, blockSize, apertureSize, k);

}

To obtain a list of Harris corners, use the cvGoodFeaturesToTrack function with suitable parameters.

ShiTomasi Corners

By default, the cvGoodFeaturesToTrack function computes ShiTomasi corners; if the use_harris parameter is set to a non-zero value, it computes Harris corners instead.
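
The two corner types differ only in how they score the 2x2 gradient matrix accumulated over a neighbourhood. The sketch below shows both scores side by side for a symmetric matrix [a b; b c]; CornerScoreSketch and its method names are invented for this illustration and say nothing about how OpenCv organises the computation internally.

```csharp
using System;

static class CornerScoreSketch
{
    // Harris response: det(M) - k * trace(M)^2 for M = [ a b ; b c ].
    public static double Harris(double a, double b, double c, double k)
    {
        double det = a * c - b * b, trace = a + c;
        return det - k * trace * trace;
    }

    // ShiTomasi score: the smaller eigenvalue of the same symmetric matrix.
    public static double ShiTomasi(double a, double b, double c)
    {
        double half = (a + c) / 2;
        double d = Math.Sqrt((a - c) * (a - c) / 4 + b * b);
        return half - d;
    }
}
```

For a corner-like matrix (a = c = 2, b = 0) both scores are clearly positive, whereas for an edge-like matrix (a = 4, b = c = 0) the Harris response is negative and the ShiTomasi score is 0, so neither method would report a corner there.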

Sample code using the cvGoodFeaturesToTrack function:

// ShiTomasi corners

private string CornerShiTomasiFeatureDetect()

{

// Read parameters

int cornerCount = int.Parse(txtGoodFeaturesCornerCount.Text);

double qualityLevel = double.Parse(txtGoodFeaturesQualityLevel.Text);

double minDistance = double.Parse(txtGoodFeaturesMinDistance.Text);

int blockSize = int.Parse(txtGoodFeaturesBlockSize.Text);

bool useHarris = cbGoodFeaturesUseHarris.Checked;

double k = double.Parse(txtGoodFeaturesK.Text);

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

PointF[][] corners = imageSourceGrayscale.GoodFeaturesToTrack(cornerCount, qualityLevel, minDistance, blockSize, useHarris, k);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

int cornerCount2 = 0;

StringBuilder sbResult = new StringBuilder();

int radius = (int)(minDistance / 2) + 1;

int thickness = (int)(minDistance / 4) + 1;

foreach (PointF[] cs in corners)

{

foreach (PointF p in cs)

{

imageResult.Draw(new CircleF(p, radius), new Bgr(255d, 0d, 0d), thickness);

cornerCount2++;

sbResult.AppendFormat("{0},", p);

}

}

pbResult.Image = imageResult.Bitmap;

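// Release resources

imageResult.Dispose();

// Return

return string.Format("·ShiTomasi corners, elapsed {0:F05} ms, parameters (maximum corner count: {1}, minimum eigenvalue quality: {2}, minimum distance between corners: {3}, block size: {4}, corner type: {5}, k: {6}), detected {7} corners\r\n{8}",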

sw.Elapsed.TotalMilliseconds, cornerCount, qualityLevel, minDistance, blockSize, useHarris ? "Harris" : "ShiTomasi", k, cornerCount2, cornerCount2 > 0 ? (sbResult.ToString() + "\r\n") : "");

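}

Sub-pixel Corners

Before detecting sub-pixel corners you must supply initial corner positions. These can come from any of the other corner detectors in this article, but the output of GoodFeaturesToTrack is the most convenient to use directly.

Sample code for sub-pixel corner detection:

// Sub-pixel corners

private string CornerSubPixFeatureDetect()

{

// Read parameters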

int winWidth = int.Parse(txtCornerSubPixWinWidth.Text);

int winHeight = int.Parse(txtCornerSubPixWinHeight.Text);

Size win = new Size(winWidth, winHeight);

int zeroZoneWidth = int.Parse(txtCornerSubPixZeroZoneWidth.Text);

int zeroZoneHeight = int.Parse(txtCornerSubPixZeroZoneHeight.Text);

Size zeroZone = new Size(zeroZoneWidth, zeroZoneHeight);

int maxIter=int.Parse(txtCornerSubPixMaxIter.Text);

double epsilon=double.Parse(txtCornerSubPixEpsilon.Text);

MCvTermCriteria criteria = new MCvTermCriteria(maxIter, epsilon);

// First compute the good features to track (ShiTomasi corners)

int cornerCount = int.Parse(txtGoodFeaturesCornerCount.Text);

double qualityLevel = double.Parse(txtGoodFeaturesQualityLevel.Text);

double minDistance = double.Parse(txtGoodFeaturesMinDistance.Text);

int blockSize = int.Parse(txtGoodFeaturesBlockSize.Text);

bool useHarris = cbGoodFeaturesUseHarris.Checked;

double k = double.Parse(txtGoodFeaturesK.Text);

PointF[][] corners = imageSourceGrayscale.GoodFeaturesToTrack(cornerCount, qualityLevel, minDistance, blockSize, useHarris, k);

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

imageSourceGrayscale.FindCornerSubPix(corners, win, zeroZone, criteria);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

int cornerCount2 = 0;

StringBuilder sbResult = new StringBuilder();

int radius = (int)(minDistance / 2) + 1;

int thickness = (int)(minDistance / 4) + 1;

foreach (PointF[] cs in corners)

{

foreach (PointF p in cs)

{

imageResult.Draw(new CircleF(p, radius), new Bgr(255d, 0d, 0d), thickness);

cornerCount2++;

sbResult.AppendFormat("{0},", p);

}

}

pbResult.Image = imageResult.Bitmap;

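// Release resources

imageResult.Dispose();

// Return

return string.Format("·Sub-pixel corners, elapsed {0:F05} ms, parameters (search window: {1}, zero zone: {2}, maximum iterations: {3}, sub-pixel accuracy: {4}), detected {5} corners\r\n{6}",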

sw.Elapsed.TotalMilliseconds, win, zeroZone, maxIter, epsilon, cornerCount2, cornerCount2 > 0 ? (sbResult.ToString() + "\r\n") : "");

}

SURF Corners

The cvExtractSURF function in OpenCv and the Image<TColor,TDepth>.ExtractSURF method in EmguCv are used to detect SURF corners.

Sample code for SURF corner detection:

// SURF corners

private string SurfFeatureDetect()

{

// Read parameters

bool getDescriptors = cbSurfGetDescriptors.Checked;

MCvSURFParams surfParam = new MCvSURFParams();

surfParam.extended=rbSurfBasicDescriptor.Checked ? 0 : 1;

surfParam.hessianThreshold=double.Parse(txtSurfHessianThreshold.Text);

surfParam.nOctaves=int.Parse(txtSurfNumberOfOctaves.Text);

surfParam.nOctaveLayers=int.Parse(txtSurfNumberOfOctaveLayers.Text);

// Compute

SURFFeature[] features = null;

MKeyPoint[] keyPoints = null;

Stopwatch sw = new Stopwatch();

sw.Start();

if (getDescriptors)

features = imageSourceGrayscale.ExtractSURF(ref surfParam);

else

keyPoints = surfParam.DetectKeyPoints(imageSourceGrayscale, null);

sw.Stop();

// Display

bool showDetail = cbSurfShowDetail.Checked;

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx = 0;

if (getDescriptors)

{

foreach (SURFFeature feature in features)

{

imageResult.Draw(new CircleF(feature.Point.pt, 5), new Bgr(255d, 0d, 0d), 2);

if (showDetail)

{

sbResult.AppendFormat("Point {0} (position: {1}, size: {2}, direction: {3}°, hessian: {4}, laplacian sign: {5}, descriptor: [",

idx, feature.Point.pt, feature.Point.size, feature.Point.dir, feature.Point.hessian, feature.Point.laplacian);

foreach (float d in feature.Descriptor)

sbResult.AppendFormat("{0},", d);

sbResult.Append("]),");

}

idx++;

}

}

else

{

foreach (MKeyPoint keypoint in keyPoints)

{

imageResult.Draw(new CircleF(keypoint.Point, 5), new Bgr(255d, 0d, 0d), 2);

if (showDetail)

sbResult.AppendFormat("Point {0} (position: {1}, size: {2}, direction: {3}°, response: {4}, octave: {5}), ",

idx, keypoint.Point, keypoint.Size, keypoint.Angle, keypoint.Response, keypoint.Octave);

idx++;

}

}

pbResult.Image = imageResult.Bitmap;

// Release resources

imageResult.Dispose();

// Return

return string.Format("·SURF corners, elapsed {0:F05} ms, parameters (descriptors: {1}, hessian threshold: {2}, number of octaves: {3}, layers per octave: {4}), detected {5} corners\r\n{6}",

sw.Elapsed.TotalMilliseconds, getDescriptors ? (surfParam.extended == 0 ? "basic descriptors" : "extended descriptors") : "no descriptors", surfParam.hessianThreshold,

surfParam.nOctaves, surfParam.nOctaveLayers, getDescriptors ? features.Length : keyPoints.Length, showDetail ? sbResult.ToString() + "\r\n" : "");

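}

Star Keypoints

The cvGetStarKeypoints function in OpenCv and the Image<TColor,TDepth>.GetStarKeypoints method in EmguCv are used to detect "star" keypoints.

Sample code for Star keypoint detection:

// Star keypoints

private string StarKeyPointFeatureDetect()

{

// Read parameters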

StarDetector starParam = new StarDetector();

starParam.MaxSize = int.Parse((string)cmbStarMaxSize.SelectedItem);

starParam.ResponseThreshold = int.Parse(txtStarResponseThreshold.Text);

starParam.LineThresholdProjected = int.Parse(txtStarLineThresholdProjected.Text);

starParam.LineThresholdBinarized = int.Parse(txtStarLineThresholdBinarized.Text);

starParam.SuppressNonmaxSize = int.Parse(txtStarSuppressNonmaxSize.Text);

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

MCvStarKeypoint[] keyPoints = imageSourceGrayscale.GetStarKeypoints(ref starParam);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx = 0;

foreach (MCvStarKeypoint keypoint in keyPoints)

{

imageResult.Draw(new CircleF(new PointF(keypoint.pt.X, keypoint.pt.Y), keypoint.size / 2), new Bgr(255d, 0d, 0d), keypoint.size / 4);

sbResult.AppendFormat("Point {0} (position: {1}, size: {2}, response: {3}), ", idx, keypoint.pt, keypoint.size, keypoint.response);

idx++;

}

pbResult.Image = imageResult.Bitmap;

// Release resources

imageResult.Dispose();

// Return

return string.Format("·Star keypoints, elapsed {0:F05} ms, parameters (MaxSize: {1}, ResponseThreshold: {2}, LineThresholdProjected: {3}, LineThresholdBinarized: {4}, SuppressNonmaxSize: {5}), detected {6} keypoints\r\n{7}",

sw.Elapsed.TotalMilliseconds, starParam.MaxSize, starParam.ResponseThreshold, starParam.LineThresholdProjected, starParam.LineThresholdBinarized, starParam.SuppressNonmaxSize, keyPoints.Length, keyPoints.Length > 0 ? (sbResult.ToString() + "\r\n") : "");

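}

FAST Corner Detection

FAST corners were proposed by Professor E. Rosten. True to its name, the method is considerably faster than other detectors. It is worth noting that his research is all of a practical kind, and all of it is fast. Rosten implemented FAST corner detection and contributed it to OpenCv, which is very generous; however, the function in OpenCv seems to differ slightly from Rosten's own implementation. Unfortunately, OpenCv does not yet have documentation for FAST corner detection. Below is the function declaration I found in Rosten's code, which gives a rough description of the parameters.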

/*

The references are:

* Machine learning for high-speed corner detection,

E. Rosten and T. Drummond, ECCV 2006

* Faster and better: A machine learning approach to corner detection

E. Rosten, R. Porter and T. Drummond, PAMI, 2009

*/

void cvCornerFast( const CvArr* image, int threshold, int N,

int nonmax_suppression, int* ret_number_of_corners,

CvPoint** ret_corners);

image: OpenCV image in which to detect corners. Must be 8 bit unsigned.

threshold: Threshold for detection (higher is fewer corners). 0--255

N: Arc length of detector, 9, 10, 11 or 12. 9 is usually best.

nonmax_suppression: Whether to perform nonmaximal suppression.

ret_number_of_corners: The number of detected corners is returned here.

ret_corners: The corners are returned here.

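The Image<TColor,TDepth>.GetFASTKeypoints method in EmguCv also implements FAST corner detection, but with fewer parameters: only threshold and nonmaxSupression. I assume N takes the default value 9, and I do not know how the number of returned corners is determined.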
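
To show what threshold and N mean, here is a minimal sketch of the segment test at the heart of FAST: a pixel is a corner candidate when at least N contiguous pixels on the 16-pixel circle around it are all brighter than the center plus the threshold, or all darker than the center minus the threshold. It is plain C# operating on already-sampled ring values; FastSegmentTestSketch and its members are hypothetical names, and the real implementations use a learned decision tree for speed rather than this direct loop.

```csharp
using System;

static class FastSegmentTestSketch
{
    // ring: the 16 intensities on the circle around the candidate pixel, in circular order.
    public static bool IsCorner(byte center, byte[] ring, int threshold, int n)
    {
        if (ring.Length != 16) throw new ArgumentException("ring must hold 16 samples");
        return HasArc(ring, n, v => v > center + threshold)   // contiguous brighter arc
            || HasArc(ring, n, v => v < center - threshold);  // contiguous darker arc
    }

    static bool HasArc(byte[] ring, int n, Func<int, bool> test)
    {
        int run = 0;
        // Walk the ring twice so that arcs wrapping past index 15 are counted too.
        for (int i = 0; i < 32; i++)
        {
            run = test(ring[i % 16]) ? run + 1 : 0;
            if (run >= n) return true;
        }
        return false;
    }
}
```

With N = 9, nine consecutive bright samples are enough for a corner, while eight are not; raising the threshold makes the test stricter and therefore returns fewer corners.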

Sample code using FAST corner detection:

// FAST keypoints

private string FASTKeyPointFeatureDetect()

{

// Read parameters

int threshold = int.Parse(txtFASTThreshold.Text);

bool nonmaxSuppression = cbFASTNonmaxSuppression.Checked;

bool showDetail = cbFASTShowDetail.Checked;

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

MKeyPoint[] keyPoints = imageSourceGrayscale.GetFASTKeypoints(threshold, nonmaxSuppression);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx = 0;

foreach (MKeyPoint keypoint in keyPoints)

{

imageResult.Draw(new CircleF(keypoint.Point, (int)(keypoint.Size / 2)), new Bgr(255d, 0d, 0d), (int)(keypoint.Size / 4));

if (showDetail)

sbResult.AppendFormat("Point {0} (position: {1}, size: {2}, direction: {3}°, response: {4}, octave: {5}), ",

idx, keypoint.Point, keypoint.Size, keypoint.Angle, keypoint.Response, keypoint.Octave);

idx++;

}

pbResult.Image = imageResult.Bitmap;

// Release resources

imageResult.Dispose();

// Return

return string.Format("·FAST keypoints, elapsed {0:F05} ms, parameters (threshold: {1}, nonmaxSupression: {2}), detected {3} keypoints\r\n{4}",

sw.Elapsed.TotalMilliseconds, threshold, nonmaxSuppression, keyPoints.Length, showDetail ? (sbResult.ToString() + "\r\n") : "");

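}

Lepetit Keypoints

Lepetit keypoints were proposed by Vincent Lepetit; related papers and other material are available on his website (http://cvlab.epfl.ch/~vlepetit/). The LDetector class in EmguCv implements Lepetit keypoint detection.

Sample code using Lepetit keypoint detection:

// Lepetit keypoints

private string LepetitKeyPointFeatureDetect()

{

// Read parameters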

LDetector lepetitDetector = new LDetector();

lepetitDetector.BaseFeatureSize = double.Parse(txtLepetitBaseFeatureSize.Text);

lepetitDetector.ClusteringDistance = double.Parse(txtLepetitClasteringDistance.Text);

lepetitDetector.NOctaves = int.Parse(txtLepetitNumberOfOctaves.Text);

lepetitDetector.NViews = int.Parse(txtLepetitNumberOfViews.Text);

lepetitDetector.Radius = int.Parse(txtLepetitRadius.Text);

lepetitDetector.Threshold = int.Parse(txtLepetitThreshold.Text);

lepetitDetector.Verbose = cbLepetitVerbose.Checked;

int maxCount = int.Parse(txtLepetitMaxCount.Text);

bool scaleCoords = cbLepetitScaleCoords.Checked;

bool showDetail = cbLepetitShowDetail.Checked;

// Compute

Stopwatch sw = new Stopwatch();

sw.Start();

MKeyPoint[] keyPoints = lepetitDetector.DetectKeyPoints(imageSourceGrayscale, maxCount, scaleCoords);

sw.Stop();

// Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx = 0;

foreach (MKeyPoint keypoint in keyPoints)

{

//imageResult.Draw(new CircleF(keypoint.Point, (int)(keypoint.Size / 2)), new Bgr(255d, 0d, 0d), (int)(keypoint.Size / 4));

imageResult.Draw(new CircleF(keypoint.Point, 4), new Bgr(255d, 0d, 0d), 2);

if (showDetail)

sbResult.AppendFormat("Point {0} (position: {1}, size: {2}, direction: {3}°, response: {4}, octave: {5}), ",

idx, keypoint.Point, keypoint.Size, keypoint.Angle, keypoint.Response, keypoint.Octave);

idx++;

}

pbResult.Image = imageResult.Bitmap;

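// Release resources

imageResult.Dispose();

// Return

return string.Format("·Lepetit keypoints, elapsed {0:F05} ms, parameters (base feature size: {1}, clustering distance: {2}, number of octaves: {3}, number of views: {4}, radius: {5}, threshold: {6}, verbose: {7}, maximum keypoint count: {8}, scale coordinates: {9}), detected {10} keypoints\r\n{11}",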

sw.Elapsed.TotalMilliseconds, lepetitDetector.BaseFeatureSize, lepetitDetector.ClusteringDistance, lepetitDetector.NOctaves, lepetitDetector.NViews,

lepetitDetector.Radius, lepetitDetector.Threshold, lepetitDetector.Verbose, maxCount, scaleCoords, keyPoints.Length, showDetail ? (sbResult.ToString() + "\r\n") : "");

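}

SIFT Corners

SIFT corners are a widely used image feature, applicable to object tracking, image matching, image stitching and similar tasks; oddly, OpenCv does not implement them. Professor David Lowe, who proposed SIFT, has implemented SIFT detection in C and matlab and released the source code, but his implementation is not convenient to use directly. You can find an introduction to SIFT, the related papers and David Lowe's implementation at http://www.cs.ubc.ca/~lowe/keypoints/. Below I introduce vlfeat, an open-source image processing library created by Andrea Vedaldi and Brian Fulkerson; it has C and matlab implementations and includes SIFT detection. The code, documentation and executables of vlfeat can be downloaded from http://www.vlfeat.org/.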

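Detecting SIFT corners with vlfeat involves the following steps:

(1) Use vl_sift_new() to initialize a SIFT filter object; the filter can be reused for multiple images of the same size;

(2) Use vl_sift_process_first_octave() and vl_sift_process_next_octave() to iterate over each octave of the scale space until VL_ERR_EOF is returned;

(3) For each octave of the scale space, use vl_sift_detect() to obtain the keypoints;

(4) For each keypoint, use vl_sift_calc_keypoint_orientations() to obtain its orientations;

(5) For each orientation of a keypoint, use vl_sift_calc_keypoint_descriptor() to obtain the descriptor for that orientation;

(6) When finished, use vl_sift_delete() to release resources;

(7) To compute the descriptor of a custom keypoint, use vl_sift_calc_raw_descriptor().

Sample code that calls the SIFT detection in vlfeat directly:

// SIFT detection by calling vlfeat functions through P/Invoke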

unsafe private string SiftFeatureDetectByPinvoke(int noctaves, int nlevels, int o_min, bool showDetail)

{

StringBuilder sbResult = new StringBuilder();

// Initialize

IntPtr ptrSiftFilt = VlFeatInvoke.vl_sift_new(imageSource.Width, imageSource.Height, noctaves, nlevels, o_min);

if (ptrSiftFilt == IntPtr.Zero)

return "SIFT feature detection: initialization failed.";

// Process

Image<Gray, Single> imageSourceSingle = imageSourceGrayscale.ConvertScale<Single>(1d, 0d);

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

int pointCount = 0;

int idx = 0;

// Iterate over each octave in turn

if (VlFeatInvoke.vl_sift_process_first_octave(ptrSiftFilt, imageSourceSingle.MIplImage.imageData) != VlFeatInvoke.VL_ERR_EOF)

{

while (true)

{

// Detect the keypoints in the current octave

VlFeatInvoke.vl_sift_detect(ptrSiftFilt);

// Iterate over and draw each point

VlSiftFilt siftFilt = (VlSiftFilt)Marshal.PtrToStructure(ptrSiftFilt, typeof(VlSiftFilt));

pointCount += siftFilt.nkeys;

VlSiftKeypoint* pKeyPoints = (VlSiftKeypoint*)siftFilt.keys.ToPointer();

for (int i = 0; i < siftFilt.nkeys; i++)

{

VlSiftKeypoint keyPoint = *pKeyPoints;

pKeyPoints++;

imageResult.Draw(new CircleF(new PointF(keyPoint.x, keyPoint.y), keyPoint.sigma / 2), new Bgr(255d, 0d, 0d), 2);

if (showDetail)

sbResult.AppendFormat("Point {0}, position: ({1},{2}), octave: {3}, scale: {4}, s: {5}, ", idx, keyPoint.x, keyPoint.y, keyPoint.o, keyPoint.sigma, keyPoint.s);

idx++;

// Compute and iterate over the orientations of each point

double[] angles = new double[4];

int angleCount = VlFeatInvoke.vl_sift_calc_keypoint_orientations(ptrSiftFilt, angles, ref keyPoint);

if (showDetail)

sbResult.AppendFormat("{0} orientations, ", angleCount);

for (int j = 0; j < angleCount; j++)

{

double angle = angles[j];

if (showDetail)

sbResult.AppendFormat("[orientation: {0}, descriptor: ", angle);

// Compute the descriptor for each orientation

IntPtr ptrDescriptors = Marshal.AllocHGlobal(128 * sizeof(float));

VlFeatInvoke.vl_sift_calc_keypoint_descriptor(ptrSiftFilt, ptrDescriptors, ref keyPoint, angle);

float* pDescriptors = (float*)ptrDescriptors.ToPointer();

for (int k = 0; k < 128; k++)

{

float descriptor = *pDescriptors;

pDescriptors++;

if (showDetail)

sbResult.AppendFormat("{0},", descriptor);

}

sbResult.Append("], ");

Marshal.FreeHGlobal(ptrDescriptors);

}

}

// Next octave

if (VlFeatInvoke.vl_sift_process_next_octave(ptrSiftFilt) == VlFeatInvoke.VL_ERR_EOF)

break;

}

}

// Display

pbResult.Image = imageResult.Bitmap;

// Release resources

VlFeatInvoke.vl_sift_delete(ptrSiftFilt);

imageSourceSingle.Dispose();

imageResult.Dispose();

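// Return

return string.Format("·SIFT feature detection (P/Invoke), elapsed: not measured, parameters (number of octaves: {0}, levels per octave: {1}, minimum octave index: {2}), {3} keypoints\r\n{4}",

noctaves, nlevels, o_min, pointCount, showDetail ? (sbResult.ToString() + "\r\n") : "");

}

Using vlfeat from .net this way is still not convenient enough, so I wrapped the SIFT detection part of vlfeat and put the related operations into a class named SiftDetector.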

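Using SiftDetector takes two or three steps:

(1) Initialize a SiftDetector object with its constructor;

(2) Compute the features with the Process method;

(3) Call the Dispose method to release resources when appropriate, or wait for the garbage collector to release them automatically.

Sample code using SiftDetector:

// SIFT detection through the .net wrapper class SiftDetector

private string SiftFeatureDetectByDotNet(int noctaves, int nlevels, int o_min, bool showDetail)

{

// Initialize the object

SiftDetector siftDetector = new SiftDetector(imageSource.Size, noctaves, nlevels, o_min);

// Compute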

Image<Gray, Single> imageSourceSingle = imageSourceGrayscale.Convert<Gray, Single>();

Stopwatch sw = new Stopwatch();

sw.Start();

List<SiftFeature> features = siftDetector.Process(imageSourceSingle, showDetail ? SiftDetectorResultType.Extended : SiftDetectorResultType.Basic);

sw.Stop();

// Display the result

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx=0;

foreach (SiftFeature feature in features)

{

imageResult.Draw(new CircleF(new PointF(feature.keypoint.x, feature.keypoint.y), feature.keypoint.sigma / 2), new Bgr(255d, 0d, 0d), 2);

if (showDetail)

{

sbResult.AppendFormat("Point {0}, position: ({1},{2}), octave: {3}, scale: {4}, s: {5}, ",

idx, feature.keypoint.x, feature.keypoint.y, feature.keypoint.o, feature.keypoint.sigma, feature.keypoint.s);

sbResult.AppendFormat("{0} orientations, ", feature.keypointOrientations != null ? feature.keypointOrientations.Length : 0);

if (feature.keypointOrientations != null)

{

foreach (SiftKeyPointOrientation orientation in feature.keypointOrientations)

{

if (orientation.descriptors != null)

{

sbResult.AppendFormat("[orientation: {0}, descriptor: ", orientation.angle);

foreach (float descriptor in orientation.descriptors)

sbResult.AppendFormat("{0},", descriptor);

}

else

sbResult.AppendFormat("[orientation: {0}, ", orientation.angle);

sbResult.Append("], ");

}

}

}

}

pbResult.Image = imageResult.Bitmap;

// Release resources

siftDetector.Dispose();

imageSourceSingle.Dispose();

imageResult.Dispose();

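// Return

return string.Format("·SIFT feature detection (.net), elapsed: {0:F05} ms, parameters (number of octaves: {1}, levels per octave: {2}, minimum octave index: {3}), {4} keypoints\r\n{5}",

sw.Elapsed.TotalMilliseconds, noctaves, nlevels, o_min, features.Count, showDetail ? (sbResult.ToString() + "\r\n") : "");

}

The wrapper code for the SIFT part of the vlfeat library is shown below: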

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Runtime.InteropServices;

namespace ImageProcessLearn

{

[StructLayoutAttribute(LayoutKind.Sequential)]

public struct VlSiftKeypoint

{

/// int

public int o;

/// int

public int ix;

/// int

public int iy;

/// int

public int @is;

/// float

public float x;

/// float

public float y;

/// float

public float s;

/// float

public float sigma;

}

[StructLayoutAttribute(LayoutKind.Sequential)]

public struct VlSiftFilt

{

/// double

public double sigman;

/// double

public double sigma0;

/// double

public double sigmak;

/// double

public double dsigma0;

/// int

public int width;

/// int

public int height;

/// int

public int O;

/// int

public int S;

/// int

public int o_min;

/// int

public int s_min;

/// int

public int s_max;

/// int

public int o_cur;

/// vl_sift_pix*

public System.IntPtr temp;

/// vl_sift_pix*

public System.IntPtr octave;

/// vl_sift_pix*

public System.IntPtr dog;

/// int

public int octave_width;

/// int

public int octave_height;

/// VlSiftKeypoint*

public System.IntPtr keys;

/// int

public int nkeys;

/// int

public int keys_res;

/// double

public double peak_thresh;

/// double

public double edge_thresh;

/// double

public double norm_thresh;

/// double

public double magnif;

/// double

public double windowSize;

/// vl_sift_pix*

public System.IntPtr grad;

/// int

public int grad_o;

/// <summary>

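/// Gets a pointer to a copy of this SiftFilt structure;

/// note that the memory must be released with Marshal.FreeHGlobal once the pointer is no longer needed.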

/// </summary>

/// <returns></returns>

unsafe public IntPtr GetPtrOfVlSiftFilt()

{

IntPtr ptrSiftFilt = Marshal.AllocHGlobal(sizeof(VlSiftFilt));

Marshal.StructureToPtr(this, ptrSiftFilt, false); // false: the freshly allocated block holds no previous structure to destroy

return ptrSiftFilt;

}

}

public class VlFeatInvoke

{

/// VL_ERR_MSG_LEN -> 1024

public const int VL_ERR_MSG_LEN = 1024;

/// VL_ERR_OK -> 0

public const int VL_ERR_OK = 0;

/// VL_ERR_OVERFLOW -> 1

public const int VL_ERR_OVERFLOW = 1;

/// VL_ERR_ALLOC -> 2

public const int VL_ERR_ALLOC = 2;

/// VL_ERR_BAD_ARG -> 3

public const int VL_ERR_BAD_ARG = 3;

/// VL_ERR_IO -> 4

public const int VL_ERR_IO = 4;

/// VL_ERR_EOF -> 5

public const int VL_ERR_EOF = 5;

/// VL_ERR_NO_MORE -> 5

public const int VL_ERR_NO_MORE = 5;

/// Return Type: VlSiftFilt*

/// int

///width: int

///height: int

///noctaves: int

///nlevels: int

///o_min: int

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_new")]

public static extern System.IntPtr vl_sift_new(int width, int height, int noctaves, int nlevels, int o_min);

/// Return Type: void

///f: VlSiftFilt*

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_delete")]

public static extern void vl_sift_delete(IntPtr f);

/// Return Type: int

///f: VlSiftFilt*

///im: vl_sift_pix*

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_process_first_octave")]

public static extern int vl_sift_process_first_octave(IntPtr f, IntPtr im);

/// Return Type: int

///f: VlSiftFilt*

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_process_next_octave")]

public static extern int vl_sift_process_next_octave(IntPtr f);

/// Return Type: void

///f: VlSiftFilt*

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_detect")]

public static extern void vl_sift_detect(IntPtr f);

/// Return Type: int

///f: VlSiftFilt*

///angles: double*

///k: VlSiftKeypoint*

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_calc_keypoint_orientations")]

public static extern int vl_sift_calc_keypoint_orientations(IntPtr f, double[] angles, ref VlSiftKeypoint k);

/// Return Type: void

///f: VlSiftFilt*

///descr: vl_sift_pix*

///k: VlSiftKeypoint*

///angle: double

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_calc_keypoint_descriptor")]

public static extern void vl_sift_calc_keypoint_descriptor(IntPtr f, IntPtr descr, ref VlSiftKeypoint k, double angle);

/// Return Type: void

///f: VlSiftFilt*

///image: vl_sift_pix*

///descr: vl_sift_pix*

///width: int

///height: int

///x: double

///y: double

///s: double

///angle0: double

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_calc_raw_descriptor")]

public static extern void vl_sift_calc_raw_descriptor(IntPtr f, IntPtr image, IntPtr descr, int width, int height, double x, double y, double s, double angle0);

/// Return Type: void

///f: VlSiftFilt*

///k: VlSiftKeypoint*

///x: double

///y: double

///sigma: double

[DllImportAttribute("vl.dll", EntryPoint = "vl_sift_keypoint_init")]

public static extern void vl_sift_keypoint_init(IntPtr f, ref VlSiftKeypoint k, double x, double y, double sigma);

}

}
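The note on GetPtrOfVlSiftFilt in the listing above says the returned pointer must be released with Marshal.FreeHGlobal. The following is a minimal sketch of that allocate, copy, free pattern. DemoFilt and MarshalSketch are hypothetical names used only for illustration (DemoFilt stands in for a blittable struct such as VlSiftFilt), so the example runs without vl.dll.

```csharp
using System;
using System.Runtime.InteropServices;

// Hypothetical stand-in for a blittable struct such as VlSiftFilt.
[StructLayout(LayoutKind.Sequential)]
public struct DemoFilt
{
    public int width;
    public int height;
    public double peak_thresh;
}

public static class MarshalSketch
{
    // Copies the struct into unmanaged memory, reads it back,
    // and frees the buffer even if an exception is thrown.
    public static DemoFilt RoundTrip(DemoFilt source)
    {
        IntPtr ptr = Marshal.AllocHGlobal(Marshal.SizeOf(typeof(DemoFilt)));
        try
        {
            // fDeleteOld = false: the new buffer holds no previous structure.
            Marshal.StructureToPtr(source, ptr, false);
            return (DemoFilt)Marshal.PtrToStructure(ptr, typeof(DemoFilt));
        }
        finally
        {
            Marshal.FreeHGlobal(ptr);
        }
    }

    public static void Main()
    {
        DemoFilt filt = new DemoFilt();
        filt.width = 583;
        filt.height = 301;
        filt.peak_thresh = 0.0;
        DemoFilt copy = RoundTrip(filt);
        Console.WriteLine("{0}x{1}", copy.width, copy.height); // prints "583x301"
    }
}
```

Putting FreeHGlobal in a finally block is one way to make sure the unmanaged buffer is not leaked when the code between allocation and release throws.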

The SiftDetector class is implemented as follows:

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Drawing;

using System.Runtime.InteropServices;

using Emgu.CV;

using Emgu.CV.Structure;

namespace ImageProcessLearn

{

/// <summary>

/// SIFT detector

/// </summary>

public class SiftDetector : IDisposable

{

//Member variables

private IntPtr ptrSiftFilt;

//Properties

/// <summary>

/// Pointer to the native SiftFilt

/// </summary>

public IntPtr PtrSiftFilt

{

get

{

return ptrSiftFilt;

}

}

/// <summary>

/// Gets a managed copy of the SiftFilt held by the SIFT detector

/// </summary>

public VlSiftFilt SiftFilt

{

get

{

return (VlSiftFilt)Marshal.PtrToStructure(ptrSiftFilt, typeof(VlSiftFilt));

}

}

/// <summary>

/// Constructor

/// </summary>

/// <param name="width">Image width</param>

/// <param name="height">Image height</param>

/// <param name="noctaves">Number of octaves</param>

/// <param name="nlevels">Number of levels per octave</param>

/// <param name="o_min">Index of the first (lowest) octave</param>

public SiftDetector(int width, int height, int noctaves, int nlevels, int o_min)

{

ptrSiftFilt = VlFeatInvoke.vl_sift_new(width, height, noctaves, nlevels, o_min);

}

public SiftDetector(int width, int height)

: this(width, height, 4, 2, 0)

{ }

public SiftDetector(Size size, int noctaves, int nlevels, int o_min)

: this(size.Width, size.Height, noctaves, nlevels, o_min)

{ }

public SiftDetector(Size size)

: this(size.Width, size.Height, 4, 2, 0)

{ }

/// <summary>

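/// Runs SIFT detection and returns the result

/// </summary>

/// <param name="im">Single-channel floating-point image data; it need not be normalized to the range [0,1]</param>

/// <param name="resultType">Type of SIFT detection result</param>

/// <returns>The detection result as a list of SIFT features, or null if detection fails.</returns>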

unsafe public List<SiftFeature> Process(IntPtr im, SiftDetectorResultType resultType)

{

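//Declare variables

List<SiftFeature> features = null; //detection result: list of SIFT features

VlSiftFilt siftFilt; //managed copy of the native filter state

VlSiftKeypoint* pKeyPoints; //pointer to the keypoints

VlSiftKeypoint keyPoint; //current keypoint

SiftKeyPointOrientation[] orientations; //orientations and descriptors of the keypoint

double[] angles = new double[4]; //orientations (angles) of the keypoint

int angleCount; //number of orientations of a keypoint

double angle; //orientation

float[] descriptors; //descriptor for one orientation of the keypoint

IntPtr ptrDescriptors = Marshal.AllocHGlobal(128 * sizeof(float)); //pointer to the descriptor buffer

//Process each octave in turn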

if (VlFeatInvoke.vl_sift_process_first_octave(ptrSiftFilt, im) != VlFeatInvoke.VL_ERR_EOF)

{

features = new List<SiftFeature>(100);

while (true)

{

//Detect the keypoints in the current octave

VlFeatInvoke.vl_sift_detect(ptrSiftFilt);

//Iterate over the keypoints

siftFilt = (VlSiftFilt)Marshal.PtrToStructure(ptrSiftFilt, typeof(VlSiftFilt));

pKeyPoints = (VlSiftKeypoint*)siftFilt.keys.ToPointer();

for (int i = 0; i < siftFilt.nkeys; i++)

{

keyPoint = *pKeyPoints;

pKeyPoints++;

orientations = null;

if (resultType == SiftDetectorResultType.Normal || resultType == SiftDetectorResultType.Extended)

{

//Compute the orientations of the keypoint and iterate over them

angleCount = VlFeatInvoke.vl_sift_calc_keypoint_orientations(ptrSiftFilt, angles, ref keyPoint);

orientations = new SiftKeyPointOrientation[angleCount];

for (int j = 0; j < angleCount; j++)

{

angle = angles[j];

descriptors = null;

if (resultType == SiftDetectorResultType.Extended)

{

//Compute the descriptor for this orientation

VlFeatInvoke.vl_sift_calc_keypoint_descriptor(ptrSiftFilt, ptrDescriptors, ref keyPoint, angle);

descriptors = new float[128];

Marshal.Copy(ptrDescriptors, descriptors, 0, 128);

}

orientations[j] = new SiftKeyPointOrientation(angle, descriptors); //store the orientation and its descriptor

}

}

features.Add(new SiftFeature(keyPoint, orientations)); //add the feature to the list

}

//Move on to the next octave

if (VlFeatInvoke.vl_sift_process_next_octave(ptrSiftFilt) == VlFeatInvoke.VL_ERR_EOF)

break;

}

}

//Release resources

Marshal.FreeHGlobal(ptrDescriptors);

//Return the result

return features;

}

/// <summary>

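/// Runs basic SIFT detection and returns the list of keypoints

/// </summary>

/// <param name="im">Single-channel floating-point image data; it need not be normalized to the range [0,1]</param>

/// <returns>The list of keypoints, or null if detection fails.</returns>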

public List<SiftFeature> Process(IntPtr im)

{

return Process(im, SiftDetectorResultType.Basic);

}

/// <summary>

/// Runs SIFT detection and returns the result

/// </summary>

/// <param name="image">Image</param>

/// <param name="resultType">Type of SIFT detection result</param>

/// <returns>The detection result as a list of SIFT features, or null if detection fails.</returns>

public List<SiftFeature> Process(Image<Gray, Single> image, SiftDetectorResultType resultType)

{

if (image.Width != SiftFilt.width || image.Height != SiftFilt.height)

throw new ArgumentException("The image size does not match the size specified in the constructor.", "image");

return Process(image.MIplImage.imageData, resultType);

}

/// <summary>

/// Runs basic SIFT detection and returns the result

/// </summary>

/// <param name="image">Image</param>

/// <returns>The detection result as a list of SIFT features, or null if detection fails.</returns>

public List<SiftFeature> Process(Image<Gray, Single> image)

{

return Process(image, SiftDetectorResultType.Basic);

}

/// <summary>

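/// Releases the native SIFT filter

/// </summary>

public void Dispose()

{

if (ptrSiftFilt != IntPtr.Zero)

{

VlFeatInvoke.vl_sift_delete(ptrSiftFilt);

ptrSiftFilt = IntPtr.Zero; //guard against a double free if Dispose is called again

}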

}

}

/// <summary>

/// SIFT feature

/// </summary>

public struct SiftFeature

{

public VlSiftKeypoint keypoint; //keypoint

public SiftKeyPointOrientation[] keypointOrientations; //orientations of the keypoint and the descriptor for each orientation

public SiftFeature(VlSiftKeypoint keypoint)

: this(keypoint, null)

{

}

public SiftFeature(VlSiftKeypoint keypoint, SiftKeyPointOrientation[] keypointOrientations)

{

this.keypoint = keypoint;

this.keypointOrientations = keypointOrientations;

}

}

/// <summary>

/// Orientation and descriptor of a SIFT keypoint

/// </summary>

public struct SiftKeyPointOrientation

{

public double angle; //orientation

public float[] descriptors; //descriptor

public SiftKeyPointOrientation(double angle)

: this(angle, null)

{

}

public SiftKeyPointOrientation(double angle, float[] descriptors)

{

this.angle = angle;

this.descriptors = descriptors;

}

}

/// <summary>

/// Type of SIFT detection result

/// </summary>

public enum SiftDetectorResultType

{

Basic, //basic: keypoints only

Normal, //normal: keypoints and orientations

Extended //extended: keypoints, orientations, and descriptors

}

}

MSER Regions

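The cvExtractMSER function in OpenCv and the Image<TColor,TDepth>.ExtractMSER method in EmguCv implement MSER region detection. The OpenCv documentation does not yet cover cvExtractMSER, so if you want documentation, consult the EmguCv documentation for now.

Note that the result of MSER detection is, for each region, the sequence of all points in that region. For example, if 3 regions are detected and one of them is the rectangle from (0,0) to (2,1), the point sequence for that region is: (0,0),(1,0),(2,0),(2,1),(1,1),(0,1).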
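Because each region is returned as the full list of its pixels rather than as an outline, one simple way to summarize a region is its bounding box. Below is a minimal sketch that uses plain int[] pairs in place of System.Drawing.Point so that it runs standalone. MserRegionSketch and BoundingBox are hypothetical names for illustration and are not part of OpenCv or EmguCv.

```csharp
using System;

public static class MserRegionSketch
{
    // Returns { minX, minY, maxX, maxY } for the pixels of one region.
    // Each element of points is an { x, y } pair.
    public static int[] BoundingBox(int[][] points)
    {
        if (points == null || points.Length == 0)
            throw new ArgumentException("The region contains no points.", "points");

        int minX = points[0][0], maxX = points[0][0];
        int minY = points[0][1], maxY = points[0][1];
        foreach (int[] p in points)
        {
            minX = Math.Min(minX, p[0]);
            maxX = Math.Max(maxX, p[0]);
            minY = Math.Min(minY, p[1]);
            maxY = Math.Max(maxY, p[1]);
        }
        return new int[] { minX, minY, maxX, maxY };
    }

    public static void Main()
    {
        // The example region from the text: the rectangle from (0,0) to (2,1).
        int[][] region =
        {
            new int[] { 0, 0 }, new int[] { 1, 0 }, new int[] { 2, 0 },
            new int[] { 2, 1 }, new int[] { 1, 1 }, new int[] { 0, 1 }
        };
        int[] box = BoundingBox(region);
        // prints "(0,0)-(2,1), 6 pixels"
        Console.WriteLine("({0},{1})-({2},{3}), {4} pixels",
            box[0], box[1], box[2], box[3], region.Length);
    }
}
```

A bounding box (or a convex hull) may give a cleaner visualization than connecting every pixel of the region in sequence, since the sequence is not a contour.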

Sample code for MSER region detection:

//MSER (region) feature detection

private string MserFeatureDetect()

{

//Read the parameters

MCvMSERParams mserParam = new MCvMSERParams();

mserParam.delta = int.Parse(txtMserDelta.Text);

mserParam.maxArea = int.Parse(txtMserMaxArea.Text);

mserParam.minArea = int.Parse(txtMserMinArea.Text);

mserParam.maxVariation = float.Parse(txtMserMaxVariation.Text);

mserParam.minDiversity = float.Parse(txtMserMinDiversity.Text);

mserParam.maxEvolution = int.Parse(txtMserMaxEvolution.Text);

mserParam.areaThreshold = double.Parse(txtMserAreaThreshold.Text);

mserParam.minMargin = double.Parse(txtMserMinMargin.Text);

mserParam.edgeBlurSize = int.Parse(txtMserEdgeBlurSize.Text);

bool showDetail = cbMserShowDetail.Checked;

//Compute

Stopwatch sw = new Stopwatch();

sw.Start();

MemStorage storage = new MemStorage();

Seq<Point>[] regions = imageSource.ExtractMSER(null, ref mserParam, storage);

sw.Stop();

//Display

Image<Bgr, Byte> imageResult = imageSourceGrayscale.Convert<Bgr, Byte>();

StringBuilder sbResult = new StringBuilder();

int idx = 0;

foreach (Seq<Point> region in regions)

{

imageResult.DrawPolyline(region.ToArray(), true, new Bgr(255d, 0d, 0d), 2);

if (showDetail)

{

sbResult.AppendFormat("Region {0}: {1} points (", idx, region.Total);

foreach (Point pt in region)

sbResult.AppendFormat("{0},", pt);

sbResult.Append(")\r\n");

}

idx++;

}

pbResult.Image = imageResult.Bitmap;

//Release resources

imageResult.Dispose();

storage.Dispose();

//Return the result

return string.Format("·MSER regions: {0:F05} ms, parameters (delta: {1}, maxArea: {2}, minArea: {3}, maxVariation: {4}, minDiversity: {5}, maxEvolution: {6}, areaThreshold: {7}, minMargin: {8}, edgeBlurSize: {9}), {10} regions detected\r\n{11}",

sw.Elapsed.TotalMilliseconds, mserParam.delta, mserParam.maxArea, mserParam.minArea, mserParam.maxVariation, mserParam.minDiversity,

mserParam.maxEvolution, mserParam.areaThreshold, mserParam.minMargin, mserParam.edgeBlurSize, regions.Length, showDetail ? sbResult.ToString() : "");

}

Performance Comparison of the Feature Detection Methods

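With so many feature detection methods introduced above, how do they actually perform? Because parameter settings strongly affect both the processing time and the results, nearly all methods were run here with their default parameters on the same image. The results on my machine are shown in the table below: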

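| Feature | Time (ms) | Number of features |
| --- | --- | --- |
| Sobel operator | 5.99420 | n/a |
| Laplace operator | 3.13440 | n/a |
| Canny operator | 3.41160 | n/a |
| Hough line transform | 13.70790 | 10 |
| Hough circle transform | 78.07720 | 0 |
| Harris corners | 9.41750 | n/a |
| ShiTomasi corners | 16.98390 | 18 |
| Sub-pixel corners | 3.63360 | 18 |
| SURF corners | 266.27000 | 151 |
| Star keypoints | 14.82800 | 56 |
| FAST corners | 31.29670 | 159 |
| SIFT corners | 287.52310 | 54 |
| MSER regions | 40.62970 | 2 |

(Image size: 583x301; processor: AMD Athlon II X2 240; memory: 4 GB DDR3; graphics card: GeForce 9500GT; operating system: Windows 7)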
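The sample methods in this article time a single call with Stopwatch. As a hedged sketch of how such measurements could be made a little more stable, the helper below does one untimed warm-up call and then averages several timed runs. TimingSketch and AverageMilliseconds are hypothetical names; the figures in the table above were not produced with this helper.

```csharp
using System;
using System.Diagnostics;

public static class TimingSketch
{
    // Runs the action once untimed, then `runs` times under a Stopwatch,
    // and returns the mean elapsed time per run in milliseconds.
    public static double AverageMilliseconds(Action action, int runs)
    {
        if (action == null) throw new ArgumentNullException("action");
        if (runs <= 0) throw new ArgumentOutOfRangeException("runs");

        action(); // warm-up (JIT compilation, caches); not included in the result

        Stopwatch sw = Stopwatch.StartNew();
        for (int i = 0; i < runs; i++)
            action();
        sw.Stop();
        return sw.Elapsed.TotalMilliseconds / runs;
    }

    public static void Main()
    {
        // Placeholder workload; in the article's setting this would be a detector call.
        double ms = AverageMilliseconds(delegate { Math.Sqrt(12345.678); }, 1000);
        Console.WriteLine("{0:F05} ms", ms);
    }
}
```

Averaging does not remove the dependence on parameters and hardware noted above; it only reduces run-to-run noise for a fixed configuration.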

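Thank you for reading this article to the end; I hope you found it helpful.

In the next article we will look at how to track the feature points (corners) discussed here.

In addition, if you need the source code for this article, please click here to download it.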
